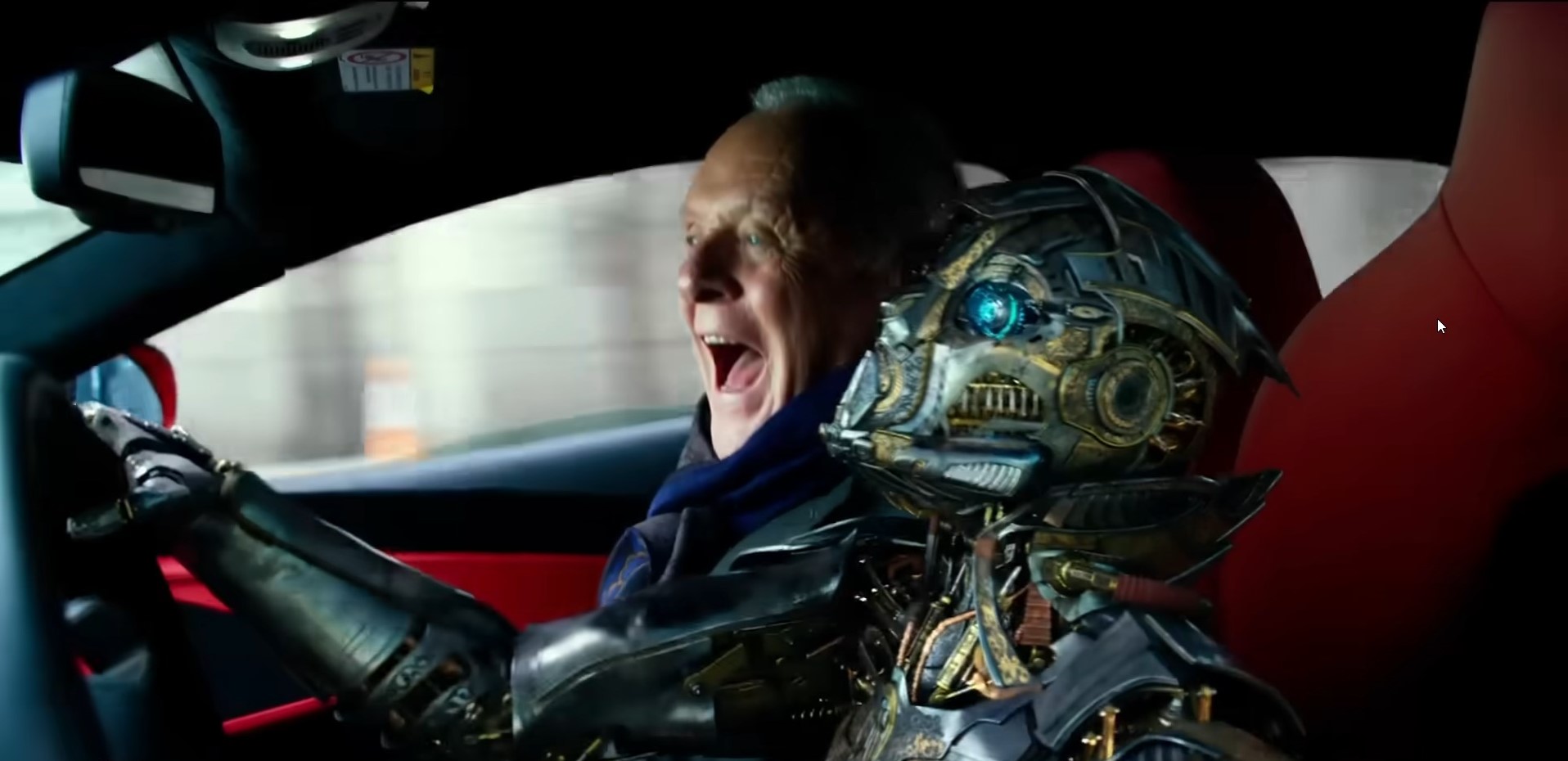

Transformers - The Last Knight

As someone who develops applications for business and pleasure, I am now required to tell a robot to develop them for me. It isn’t a hard requirement by law or anything, but the general consensus now is that if you’re not using AI tools to build software, you’re Already Behind, or whatever phrase social media is harping on. I hope you enjoy spending $10-$20 a month, forever, to subscribe to the coding AI you need to work at the new expected pace!

Naturally, I tend to be skeptical of such movements. (Anything in tech that happens to coincide with a nominal monthly subscription deserves your scrutiny, to be honest.) This is especially true since we’re still in the honeymoon phase of AI, where the bubble hasn’t quite popped and everyone is still competing to stand on top of the heap of ChatGPT wrapper apps when the dust finally settles. It’s unfortunate, because like many tools, AI can offer legitimate value as an assistant once you get past the sleight-of-hand misdirection and marketing fog.

I’ve been putting that to the test recently. In between other commitments, I’ve been developing a full-stack logistics dashboard application entirely through AI agents. At first I was just building one feature at a time, but as I tried different workflows, I started creating my own agents that referenced the code’s context and each other, and began to unlock more of the power and understanding this new development paradigm offers.

Others have already done this, of course. But you’ll have to forgive my innate reluctance to engage until now. As I said before, any good that could come out of using an AI assistant for development is heavily obfuscated by the unrelenting hype driven by corporate interests and tech-heads who simply don’t know enough to question things. Not that I’m a perfect skeptic, but it’s good to exercise some caution.

My verdict so far: once you get past the marketing Kinder shell to the toy inside, the results are fascinating and undeniable. It’s not the overnight business success promised by people whose successful business is promising you a successful business. But it’s startling how quickly I’ve been able to pull my ideas together and get them running. Watching the work happen on my own computer, right in front of me, makes the value easier to see and a little easier to trust.

I started with CursorAI and ended up burning all of its free credits on side tools as well as the start of the dashboard. At the time I was still reluctant to pay for a subscription, so I then experimented with a local setup. First I ran Ollama on my machine using qwen2.5-coder, and for basic tasks, that was fine. But I was still limited by my machine’s hardware, and I kept having to check my own knowledge against whatever syntax the model knew. I stepped up (and gave in a little) by connecting Ollama to a cloud-hosted qwen3-coder model through an Ollama account. This was better, and it also let me experiment with ChatGPT and DeepSeek models.

I needed it to see and work with my code, however. At this point I was still using Visual Studio, but if I wanted to tap more fully into AI, I needed context and integration. So I installed the RooCode extension in Visual Studio Code and pointed it at my Ollama instance. Now we had a jump in power: I could mark context and actually begin architecting features, and with that insight, RooCode started spitting out proper additions to the application. The results were better, but still bound by my local specs, and it was prone to looping and churning over poor mistakes unless I stepped in. Clearly, I needed more power (it’s still cool to reference Devil May Cry in 2026, I think).

Finally, after talking with other developers, I was convinced to bite the bullet and subscribe to GitHub Copilot. $10/month (for however long that lasts) was an easier pill to swallow for someone like me, who is experimenting with AI-driven development and isn’t terribly concerned about the security of his super-important logistics visualization demo code getting out there. But the difference was stark. From there I dipped my toes into writing full agent.md files, handing the reins to those agents to build my entire React frontend while fleshing out the API endpoints and database connections. I’ve never pulled software together this quickly before. And so far, the working skeleton I’m building functions as expected.

But it’s not magic. No tool is. My experience so far developing purely through AI has shown me the following:

- You still need to actually know the concepts behind what you’re building. Hopefully the dangers of vibecoding have been illustrated by now: it’s fine for pulling something together quickly or messing around, but in the long run, knowing quality software design concepts and how your frameworks actually function will be vital. Currently I’m working on a docker-compose file to tie the individual frontend, backend, and database containers together. Not only has it introduced me to Docker concepts beyond the basics I already knew, it’s exposed how much more I need to learn about Docker if I want to use AI to build a good Docker application that doesn’t break under pressure. I wouldn’t jump into a technical interview without studying up on my own, that’s for sure.

- You should still pay attention to what you’re doing. At its current level, AI is best used as an assistant you direct, scrutinizing whatever it returns. But with a magical tool that does your work for you, it’s tempting to go on autopilot. The stretches I used to spend straining over exactly how the code should work don’t come up as often when I work with AI, and without something to fill that mental space, it’s easy to zone out and lose sight of what you’re building. More than once I’ve had to redirect myself to check what the agent wrote and make sure it’s up to snuff. Good thing I meditate and can pull myself back into the present moment that way. But a mind wanders; that’s just what minds do. AI just makes it easier.

- Focus on test-driven development. Part of this is covering your rear in case code the bot writes later interferes with code it has already written. Part of it is accounting for the surprise bugs and security holes that AI-written code can introduce. But a big part of it is that tests are another way to maintain awareness. If you’re thinking in tests, you stay in the more active mindset of directing the agent instead of letting it fly off on its own. You’re not just telling it to write features; you’re introducing edge cases as guardrails and thinking through better software design over the long run. If the robot is going to drive you to work, it’s better to set the roads it has to take.
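To make the “edge cases as guardrails” idea a bit more concrete, here’s a minimal sketch in TypeScript (a natural fit next to a React frontend). The function `estimateDeliveryDays` and its rules are hypothetical, invented for illustration and not taken from the actual dashboard. The point is only that the tests exist first and pin down edge cases before an agent is asked to implement or change anything. It uses plain `throw`-based checks instead of a test runner like Jest so it stays self-contained.

```typescript
// Hypothetical logistics helper, NOT from the real dashboard: estimates how
// many whole days a shipment takes, given distance and a daily travel limit.
function estimateDeliveryDays(distanceKm: number, kmPerDay: number): number {
  // Guardrail: reject nonsense inputs instead of returning NaN or Infinity.
  if (!Number.isFinite(distanceKm) || distanceKm < 0) {
    throw new RangeError("distanceKm must be a non-negative finite number");
  }
  if (!Number.isFinite(kmPerDay) || kmPerDay <= 0) {
    throw new RangeError("kmPerDay must be a positive finite number");
  }
  // A partial day of travel still counts as a full delivery day.
  return Math.ceil(distanceKm / kmPerDay);
}

// Minimal assertion helpers so the sketch runs without a test framework.
function expectEqual(actual: unknown, expected: unknown, label: string): void {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${String(expected)}, got ${String(actual)}`);
  }
}

function expectThrows(fn: () => unknown, label: string): void {
  try {
    fn();
  } catch {
    return; // threw as expected
  }
  throw new Error(`${label}: expected an error to be thrown`);
}

// Tests written before asking the agent for the implementation.
// Each one is an edge case the agent's code has to keep respecting.
function runDeliveryTests(): number {
  const cases: Array<[string, () => void]> = [
    ["zero distance", () => expectEqual(estimateDeliveryDays(0, 500), 0, "zero distance")],
    ["exact multiple", () => expectEqual(estimateDeliveryDays(1000, 500), 2, "exact multiple")],
    ["partial day rounds up", () => expectEqual(estimateDeliveryDays(1001, 500), 3, "partial day")],
    ["negative distance", () => expectThrows(() => estimateDeliveryDays(-1, 500), "negative distance")],
    ["zero speed", () => expectThrows(() => estimateDeliveryDays(100, 0), "zero speed")],
    ["NaN distance", () => expectThrows(() => estimateDeliveryDays(Number.NaN, 500), "NaN distance")],
  ];
  for (const [name, run] of cases) {
    run(); // throws on the first failing case
    console.log(`ok - ${name}`);
  }
  return cases.length;
}

runDeliveryTests();
```

In a real project these checks would live in whatever test runner the stack already uses. The part worth keeping is simply that the edge cases exist as executable checks, so any later change the agent makes has to keep passing them.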

As of this writing, I’m still working on version 1.0 of this application. Right now I’m studying for certifications, and that’s been eating up time. But I still want to keep building this way, to find that ideal middle ground where I can make good stuff quickly, but also with the careful thought, involvement, and creativity I admire. I think I’ll always want to be directly in there sweating it out; my growth there feels more hard-earned. As AI tools grow more advanced, I imagine that subtle sense of disconnect and distance from the work will only grow. All the better, then, to have hobbies and real-world work that keep you rooted in the present moment.

I’d say something about offloading football to AI, but judging by each year’s Madden release, that clearly hasn’t beaten the real thing.